Models

Every agent runs on a model. The platform supports multiple LLM providers — Anthropic Claude, OpenAI GPT, Google Gemini, and others. You configure which models are available, and each agent picks one.

Why model choice matters

Models differ in:

- Quality — how good the responses are

- Speed — how fast a response comes back

- Cost — how much each response costs

- Tool use — how reliably the model picks and calls tools

- Context size — how much input the model can handle in one go

A simple FAQ agent doesn't need the most expensive model. A complex multi-step analyst agent probably does. The platform lets you assign a different model to each agent, so you can match the model to the job.

Configuring models

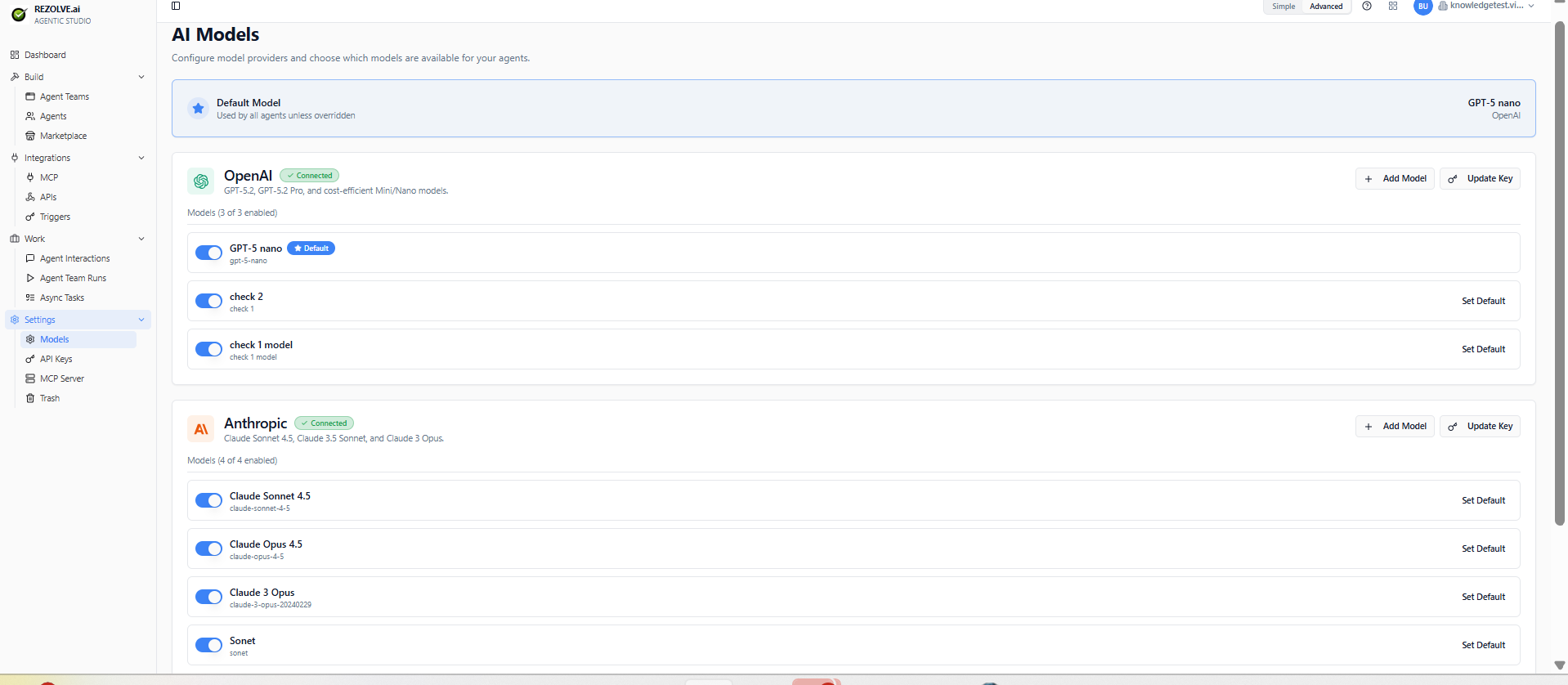

Go to Settings → Models. You'll see all models the tenant has access to, grouped by provider (OpenAI, Anthropic, etc.) with their status (Connected / Disconnected) and a default model marker.

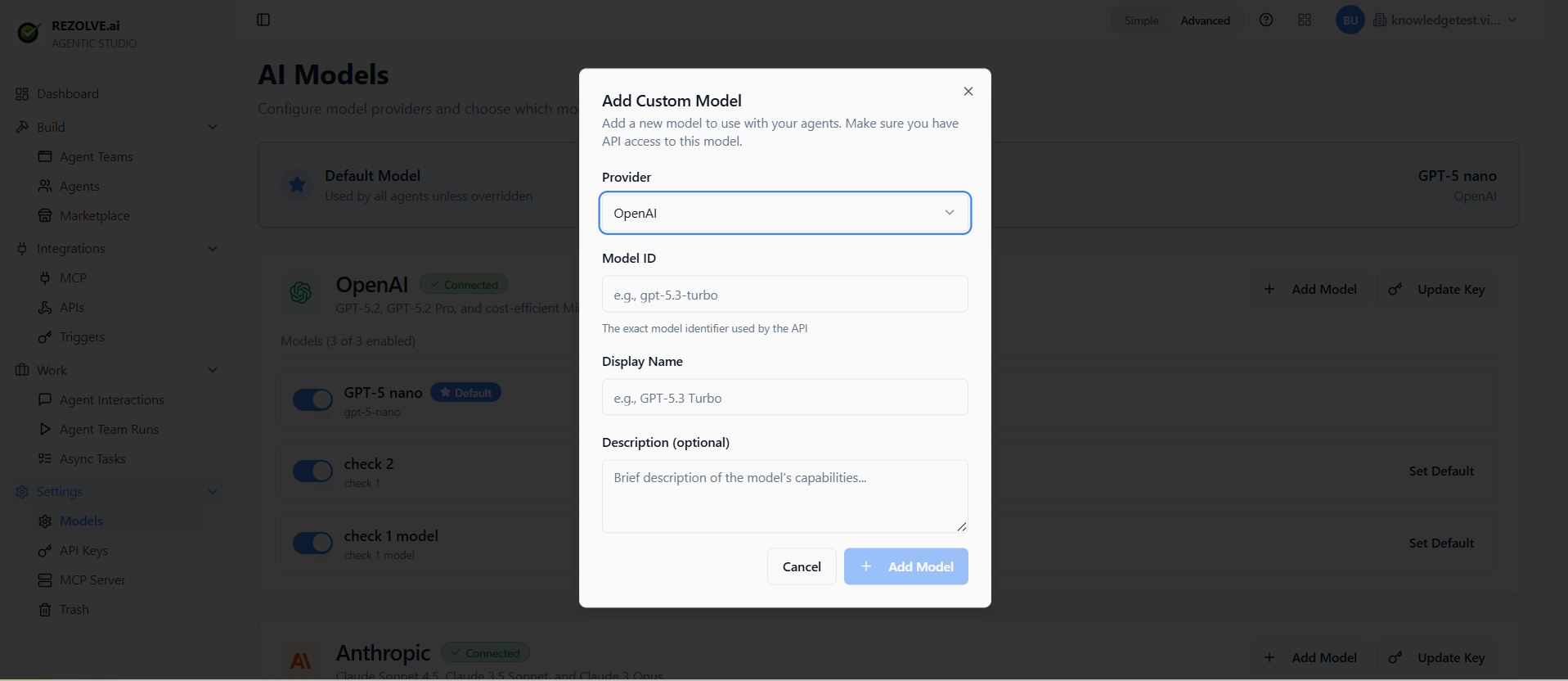

To enable a new model:

- Click Add Model next to the provider

- Enter the Model ID (the exact identifier used by the provider's API, e.g. claude-opus-4-7, gpt-5, gemini-3-pro)

- Set a friendly Display Name and optional description

- Save

Once saved, the model appears in the model dropdown when you create or edit agents.
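
The fields in the Add Model form can be pictured as a small record. The sketch below is illustrative only: `ModelEntry` and `validate_entry` are hypothetical names, not platform APIs, and the checks are assumptions about what a reasonable form would reject.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelEntry:
    """Hypothetical record mirroring the Add Model form fields."""
    provider: str          # e.g. "Anthropic"
    model_id: str          # exact identifier used by the provider's API
    display_name: str      # friendly name shown in the agent model dropdown
    description: str = ""  # optional


def validate_entry(entry: ModelEntry) -> list[str]:
    """Return a list of problems; an empty list means the entry looks usable."""
    problems = []
    if not entry.model_id:
        problems.append("Model ID is required")
    elif entry.model_id != entry.model_id.strip():
        # The ID must match the provider's identifier exactly,
        # so stray whitespace would make it a different string.
        problems.append("Model ID has leading or trailing whitespace")
    if not entry.display_name.strip():
        problems.append("Display Name is required")
    return problems
```

The point of the whitespace check is that the Model ID is an exact identifier, so a copy-paste slip is worth catching before saving.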

Switching an agent's model

Open the agent, select a different model in the dropdown, and save. The next run uses the new model. Behaviour can shift noticeably, so re-test in the sandbox before publishing the change.

Model-specific features

Some models support features others don't:

- Tool use — most modern models support it; quality varies

- Vision — accepting images as input

- Long context — 200k+ token windows

- Streaming — token-by-token output (used by public chat for responsiveness)

- Reasoning — exposing the model's chain of thought

The model picker shows which features each model supports. Pick the one that fits your agent's job.
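
Choosing by feature amounts to a subset check: a model qualifies if its feature set covers everything the agent needs. The following is a minimal sketch of that idea. The `ModelFeatures` type, the feature names, and the `model-a` / `model-b` entries are placeholders invented for illustration; real feature support should be read from the model picker.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelFeatures:
    model_id: str
    features: frozenset  # feature names such as "vision" or "streaming"


def models_supporting(models, required):
    """Return IDs of models whose feature set covers every required feature."""
    needed = frozenset(required)
    return [m.model_id for m in models if needed <= m.features]


# Placeholder catalogue, not real model data.
catalogue = [
    ModelFeatures("model-a", frozenset({"tool_use", "streaming"})),
    ModelFeatures("model-b", frozenset({"tool_use", "vision", "long_context", "streaming"})),
]

print(models_supporting(catalogue, {"vision", "tool_use"}))  # ['model-b']
```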

Default model

Set a tenant default. New agents start with that model. Individual agents can still override it by selecting a different model.
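
The default-then-override behaviour can be sketched in a few lines. `Agent` and `create_agent` are hypothetical names used only to illustrate the rule; they are not the platform's API.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Agent:
    name: str
    model_id: str


def create_agent(name: str, tenant_default: str, model_id: Optional[str] = None) -> Agent:
    """New agents start on the tenant default unless a model is chosen explicitly."""
    chosen = model_id if model_id is not None else tenant_default
    return Agent(name=name, model_id=chosen)
```

For example, `create_agent("faq", "model-a")` ends up on `model-a`, while `create_agent("analyst", "model-a", "model-b")` keeps its explicit choice of `model-b`.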

Cost guard rails

Set spend caps per model and per tenant. When a cap is reached, agents using the affected model pause until you raise the limit or switch them to a different model.

This prevents runaway costs from a misbehaving agent or a webhook that fires too often.
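
The cap check itself is simple bookkeeping. The sketch below shows one way it could work, under stated assumptions: `SpendGuard` is a hypothetical class, costs are tracked per model, and reaching the tenant cap is assumed to pause every model (the text above does not say how the tenant cap is applied).

```python
from dataclasses import dataclass, field
from decimal import Decimal


@dataclass
class SpendGuard:
    tenant_cap: Decimal
    model_caps: dict = field(default_factory=dict)  # model_id -> Decimal cap
    spent: dict = field(default_factory=dict)       # model_id -> Decimal spent so far

    def record(self, model_id: str, cost: Decimal) -> None:
        """Add the cost of one response to the model's running total."""
        self.spent[model_id] = self.spent.get(model_id, Decimal("0")) + cost

    def is_paused(self, model_id: str) -> bool:
        """True if agents on this model should pause."""
        cap = self.model_caps.get(model_id)
        if cap is not None and self.spent.get(model_id, Decimal("0")) >= cap:
            return True
        # Assumption: reaching the tenant-wide cap pauses every model.
        return sum(self.spent.values(), Decimal("0")) >= self.tenant_cap
```

`Decimal` is used instead of `float` so that repeated small charges add up exactly when compared against a cap.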

Bring-your-own-key vs platform-managed

Two ways to get model access:

- Bring your own key — you provide an API key from the provider. You pay them directly.

- Platform-managed — the platform provides the model and bills you for usage. Simpler, sometimes more expensive.

You can mix the two per provider, for example a BYO key for Anthropic and platform-managed access for OpenAI.
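
A per-provider choice like this can be thought of as a simple mapping from provider to access mode. The sketch below is hypothetical: `AccessMode`, `provider_access`, and `billed_by` are illustrative names, not platform settings.

```python
from enum import Enum


class AccessMode(Enum):
    BYO_KEY = "bring-your-own-key"
    PLATFORM_MANAGED = "platform-managed"


# Mirrors the example in the text; the mapping itself is an assumed structure.
provider_access = {
    "Anthropic": AccessMode.BYO_KEY,
    "OpenAI": AccessMode.PLATFORM_MANAGED,
}


def billed_by(provider: str) -> str:
    """Return who charges for usage of this provider's models."""
    mode = provider_access[provider]
    # With your own key you pay the provider directly; otherwise the platform bills you.
    return provider if mode is AccessMode.BYO_KEY else "platform"
```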

Related topics

- Agents

- Monitoring — for token usage and cost tracking